I have…

- [ ] Checked the logs and have provided the logs if I found something suspicious there

I’m submitting a…

- [ ] Regression (a behavior that stopped working in a new release)

- [X] Bug report

- [ ] Performance issue

- [ ] Documentation issue or request

Current behavior

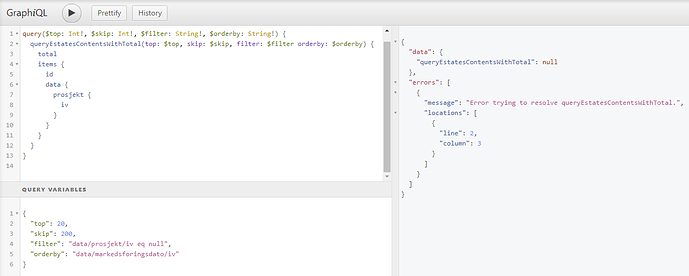

I’m paginating a list of items that I query with a filter. The filter is set to show only items where a field has no value and is returned as null.

The pagination works fine with skip in increments of 20, but when I reach item 200 I get a null result for the query. Is this a bug in the query behavior?
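For anyone trying to reproduce this, here is a minimal sketch of the paging pattern described above. The query name is borrowed from the error message quoted further down this thread; the `top`/`skip`/`filter` arguments, the field name `myField`, and the selected fields are assumptions for illustration, not the exact query from the screenshot.

```python
def page_offsets(total, page_size=20):
    """Return the skip value for every page needed to cover `total` items."""
    return range(0, total, page_size)


def build_query(skip, top=20):
    """Build one paged GraphQL query string.

    Assumptions: the schema is called MyModel, the filtered field is the
    hypothetical `myField`, and the filter is an OData-style null check.
    """
    return (
        "{ queryMyModelContentsWithTotal("
        f'top: {top}, skip: {skip}, filter: "data/myField/iv eq null"'
        ") { total items { id } } }"
    )


if __name__ == "__main__":
    # Print the queries around the offset where the null result shows up.
    for skip in page_offsets(240):
        if skip >= 180:
            print(build_query(skip))
```

Running it prints the queries for skip values 180, 200 and 220, which brackets the offset where the result turns null.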

Expected behavior

Minimal reproduction of the problem

Environment

- [X] Self hosted with docker

- [ ] Self hosted with IIS

- [ ] Self hosted with other version

- [ ] Cloud version

Version: [VERSION]

Browser:

- [X] Chrome (desktop)

- [ ] Chrome (Android)

- [ ] Chrome (iOS)

- [ ] Firefox

- [ ] Safari (desktop)

- [ ] Safari (iOS)

- [ ] IE

- [ ] Edge

Others:

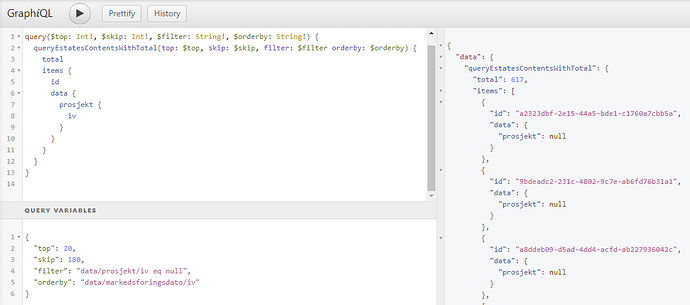

I was also trying to post this to show that it works for items below 200:

Does it only happen when you have a reference?

I tried to reproduce it, but I can’t. Can you test the latest version and check the logs?

I’ll try as soon as I can and see if I can get some more information.

Hi Sebastian,

I also have the same issue with skip. It works fine until 196, but above that number I get the following error:

Error trying to resolve queryMyModelContentsWithTotal

I need to skip through more than 800 items. I use the latest version of Squidex, self-hosted. Is there some configuration that has to be enabled?

Thanks in advance.

It should be fine. Do you see something in the logs? Like an exception message?

The log output shows the following exception:

squidex_squidex_1 | "exception": {

squidex_squidex_1 | "type": "MongoDB.Driver.MongoCommandException",

squidex_squidex_1 | "message": "Command find failed: Executor error during find command: OperationFailed: Sort operation used more than the maximum 33554432 bytes of RAM. Add an index, or specify a smaller limit..",

squidex_squidex_1 | "stackTrace": " at MongoDB.Driver.Core.WireProtocol.CommandUsingCommandMessageWireProtocol`1.ProcessResponse(ConnectionId connectionId, CommandMessage responseMessage)\n at MongoDB.Driver.Core.WireProtocol.CommandUsingCommandMessageWireProtocol`1.ExecuteAsync(IConnection connection, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Servers.Server.ServerChannel.ExecuteProtocolAsync[TResult](IWireProtocol`1 protocol, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Operations.RetryableReadOperationExecutor.ExecuteAsync[TResult](IRetryableReadOperation`1 operation, RetryableReadContext context, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Operations.ReadCommandOperation`1.ExecuteAsync(RetryableReadContext context, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Operations.FindCommandOperation`1.ExecuteAsync(RetryableReadContext context, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Operations.FindOperation`1.ExecuteAsync(RetryableReadContext context, CancellationToken cancellationToken)\n at MongoDB.Driver.Core.Operations.FindOperation`1.ExecuteAsync(IReadBinding binding, CancellationToken cancellationToken)\n at MongoDB.Driver.OperationExecutor.ExecuteReadOperationAsync[TResult](IReadBinding binding, IReadOperation`1 operation, CancellationToken cancellationToken)\n at MongoDB.Driver.MongoCollectionImpl`1.ExecuteReadOperationAsync[TResult](IClientSessionHandle session, IReadOperation`1 operation, ReadPreference readPreference, CancellationToken cancellationToken)\n at MongoDB.Driver.MongoCollectionImpl`1.UsingImplicitSessionAsync[TResult](Func`2 funcAsync, CancellationToken cancellationToken)\n at MongoDB.Driver.IAsyncCursorSourceExtensions.ToListAsync[TDocument](IAsyncCursorSource`1 source, CancellationToken cancellationToken)\n at Squidex.Domain.Apps.Entities.MongoDb.Contents.MongoContentCollection.QueryAsync(ISchemaEntity schema, ClrQuery query, List`1 ids, Status[] status, Boolean inDraft, Boolean includeDraft) in /src/src/Squidex.Domain.Apps.Entities.MongoDb/Contents/MongoContentCollection.cs:line 88\n at Squidex.Domain.Apps.Entities.MongoDb.Contents.MongoContentRepository.QueryAsync(IAppEntity app, ISchemaEntity schema, Status[] status, Boolean inDraft, ClrQuery query, Boolean includeDraft) in /src/src/Squidex.Domain.Apps.Entities.MongoDb/Contents/MongoContentRepository.cs:line 81\n at Squidex.Domain.Apps.Entities.Contents.Queries.ContentQueryService.QueryByQueryAsync(Context context, ISchemaEntity schema, Q query) in /src/src/Squidex.Domain.Apps.Entities/Contents/Queries/ContentQueryService.cs:line 301\n at Squidex.Domain.Apps.Entities.Contents.Queries.ContentQueryService.QueryAsync(Context context, String schemaIdOrName, Q query) in /src/src/Squidex.Domain.Apps.Entities/Contents/Queries/ContentQueryService.cs:line 114\n at Squidex.Domain.Apps.Entities.Contents.Queries.QueryExecutionContext.QueryContentsAsync(String schemaIdOrName, String query) in /src/src/Squidex.Domain.Apps.Entities/Contents/Queries/QueryExecutionContext.cs:line 85\n at GraphQL.Instrumentation.MiddlewareResolver.Resolve(ResolveFieldContext context)\n at Squidex.Domain.Apps.Entities.Contents.GraphQL.Middlewares.<>c__DisplayClass1_0.<<Errors>b__1>d.MoveNext() in /src/src/Squidex.Domain.Apps.Entities/Contents/GraphQL/Middlewares.cs:line 51\n--- End of stack trace from previous location where exception was thrown ---\n at Squidex.Domain.Apps.Entities.Contents.GraphQL.Middlewares.<>c__DisplayClass0_1.<<Logging>b__1>d.MoveNext() in /src/src/Squidex.Domain.Apps.Entities/Contents/GraphQL/Middlewares.cs:line 28"

squidex_squidex_1 | },

Hi,

This is a MongoDB restriction:

If MongoDB cannot obtain the sort order via an index scan, then MongoDB uses a top-k sort algorithm. This algorithm buffers the first k results (or last, depending on the sort order) seen so far by the underlying index or collection access. If at any point the memory footprint of these k results exceeds 32 megabytes, the query will fail.

https://docs.mongodb.com/manual/reference/method/cursor.sort/
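To make the numbers concrete, here is a rough back-of-the-envelope sketch. The limit is the value from the exception message above; the average document size of 155,000 bytes is purely an assumed figure for illustration, and the sketch assumes the sort has to buffer roughly `skip + top` documents.

```python
# Limit reported in the exception message ("maximum 33554432 bytes of RAM").
SORT_LIMIT_BYTES = 33_554_432


def fits_in_sort_buffer(skip, top, avg_doc_bytes, limit=SORT_LIMIT_BYTES):
    """Estimate whether an in-memory top-k sort stays under the limit.

    Assumption: the sort buffers about skip + top documents, each of
    roughly `avg_doc_bytes` bytes.
    """
    return (skip + top) * avg_doc_bytes <= limit


if __name__ == "__main__":
    # The limit is exactly 32 MiB.
    print(SORT_LIMIT_BYTES == 32 * 1024 * 1024)  # True
    # Hypothetical average document size; real content will differ.
    for skip in (180, 200):
        print(skip, fits_in_sort_buffer(skip, top=20, avg_doc_bytes=155_000))
```

With these assumed sizes the estimate stays under the limit at skip 180 and exceeds it at skip 200. That would explain why the failure depends on the offset rather than on the page size alone.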

There is no quick fix, but we can try:

- To increase the buffer size (dangerous)

- Add a feature to allow custom indexes (very difficult)

- Rewrite the query (I am not sure if it can be rewritten in general, e.g. fetch ids first, then use ids to fetch documents)
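The third option could look roughly like the sketch below. It is an in-memory stand-in, not the Squidex or MongoDB implementation: `docs` plays the role of the collection, and the function and field names are made up for illustration. The point is that only small `(sort value, id)` pairs are sorted, and the full documents are loaded only for the ids on the requested page.

```python
def query_page_two_phase(docs, sort_key, skip, top):
    """Return one page of documents using an ids-first strategy.

    Phase 1 sorts lightweight (sort value, id) pairs instead of whole
    documents. Phase 2 fetches the full documents for the page ids and
    restores the sorted order.
    """
    # Phase 1: project only what the sort needs, then sort and slice.
    keys = sorted((doc[sort_key], doc["id"]) for doc in docs)
    page_ids = [doc_id for _, doc_id in keys[skip:skip + top]]

    # Phase 2: fetch full documents by id (stand-in for an "id in [...]" query).
    wanted = set(page_ids)
    by_id = {doc["id"]: doc for doc in docs if doc["id"] in wanted}

    # An id lookup does not guarantee order, so re-apply the sorted order.
    return [by_id[doc_id] for doc_id in page_ids]


if __name__ == "__main__":
    # Hypothetical data: 300 documents with a large payload each.
    docs = [{"id": i, "created": 1000 - i, "payload": "x" * 1000} for i in range(300)]
    page = query_page_two_phase(docs, "created", skip=200, top=20)
    print([doc["id"] for doc in page])
```

Whether this helps in general depends on the projected sort values staying small enough to sort within the limit, which is why I am not sure it can replace the current query in every case.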