Hi,

Thanks for your mock-ups. Well done.

Nevertheless, I do not think it is the best idea.

Current State

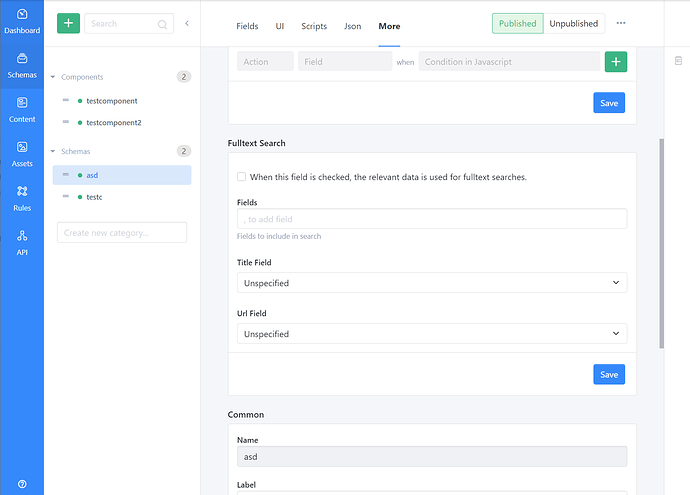

Let me explain the current state in Squidex first. At the moment Squidex has a full text implementation. It is very basic and has been migrated from a Lucene-backed implementation to one that uses MongoDB or ElasticSearch. All text fields are added to an index, and the ElasticSearch implementation also takes the different languages of localized fields into account.

The Lucene implementation produced better results than the MongoDB implementation, but it was buggy and hard to maintain. That is why the implementation was replaced.

What is the problem?

I am not sure how familiar you are with full text indexes, but most implementations are built on a so-called inverted index (sometimes called a reverse index). Let's say you have two documents.

| Id | Texts |
|----|-------|
| 1 | Hello User |
| 2 | Hello, how is it going? |

The full text index splits the text into its words (or word stems). Then it associates the ids with the words. Because some words, such as "and", are used so often, they are put on a blacklist of so-called stopwords and are not added to the index.

So after this process is done you get the following result:

| Word | Ids |
|------|-----|
| Hello | 1, 2 |
| User | 1 |
| Go | 2 |

In this case "how", "is" and "it" are treated as stopwords and "going" is reduced to its stem "go".
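To make the idea concrete, here is a minimal sketch of how such an index could be built for the two documents. The stopword list and the stem lookup are toy assumptions for this example only; a real engine uses proper per-language stemmers and stopword lists.

```python
import re

# Illustrative stopword list and stem table (assumptions for this example).
STOPWORDS = {"how", "is", "it"}
STEMS = {"going": "go"}  # toy lookup standing in for a real stemmer


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def build_index(documents):
    """Map each word stem to the sorted list of document ids containing it."""
    index = {}
    for doc_id, text in documents.items():
        for word in tokenize(text):
            if word in STOPWORDS:
                continue  # stopwords are never added to the index
            stem = STEMS.get(word, word)
            index.setdefault(stem, set()).add(doc_id)
    return {stem: sorted(ids) for stem, ids in index.items()}


documents = {1: "Hello User", 2: "Hello, how is it going?"}
index = build_index(documents)
print(index)  # {'hello': [1, 2], 'user': [1], 'go': [2]}
```

A search for "hello" is then a simple lookup in `index` and returns both ids without scanning the documents themselves.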

Problem 1: A lot to configure

To get the best results from full text search, a lot of settings have to be configured:

- Stemming and word splitting (tokenization) differ between languages, so you have to decide per field which language should be used.

- Stop words also differ per language, so you have to define per field which stopwords should be used. Sometimes you also want custom stopwords. For example, if you run a travel portal you want to exclude words like "hotel" and "flight", because they appear in basically every content item and would distort the results.

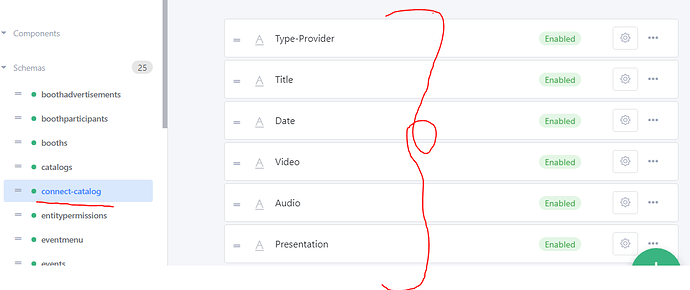

- Some fields are more important than others. For example, the title is more important than the text, so you want to add a weight to some fields.

- Some fields contain words that are hard to understand for the search engine, for example the IATA code for airports. You want to treat these fields differently.

- You also want to configure synonyms, so that the word does not have to match exactly, and approximate (fuzzy) search, so that typos are tolerated.

There are more settings and I am not an expert in full text search, but you can have a look at the documentation of Algolia or Elastic Search to understand all the different options.

Without a deep understanding of these parameters you will not get good results. There is no automatic solution that does everything for you.

So if we really want to integrate full text search into Squidex, we have to make these parameters and configuration options available to the developer.
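Just to illustrate what such configuration options could look like, here is a rough sketch. `FieldSearchConfig`, the field names and the scoring rule are all hypothetical and not part of Squidex or of any search engine. The `language` setting is only stored here, because a real stemmer is out of scope for the example.

```python
from dataclasses import dataclass, field


@dataclass
class FieldSearchConfig:
    """Hypothetical per-field settings; not an existing Squidex type."""
    language: str = "en"  # would select stemmer and default stopwords
    weight: float = 1.0   # importance of a match in this field
    extra_stopwords: set = field(default_factory=set)
    exact_match: bool = False  # for codes such as IATA airport codes


# Assumed field names for a travel offer schema.
config = {
    "title": FieldSearchConfig(weight=3.0, extra_stopwords={"hotel", "flight"}),
    "text": FieldSearchConfig(extra_stopwords={"hotel", "flight"}),
    "airportCode": FieldSearchConfig(exact_match=True),
}


def score(document, query, config):
    """Sum the weights of all fields that match the query term."""
    term = query.lower()
    total = 0.0
    for name, settings in config.items():
        value = document.get(name, "")
        if settings.exact_match:
            matched = value.lower() == term
        else:
            words = set(value.lower().split()) - settings.extra_stopwords
            matched = term in words
        if matched:
            total += settings.weight
    return total


offer = {
    "title": "Beach hotel in Lisbon",
    "text": "A quiet hotel near the beach",
    "airportCode": "LIS",
}
print(score(offer, "beach", config))  # 4.0 (title 3.0 + text 1.0)
print(score(offer, "hotel", config))  # 0.0 (custom stopword)
print(score(offer, "lis", config))    # 1.0 (exact match on the code field)
```

Even this toy version needs four settings per field, and synonyms and typo tolerance are not covered at all.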

But we also have to consider the different implementations and study what each solution offers. Here is a list of servers and technologies:

- Lucene based (a Java full text search engine)

  - Lucene itself

  - Elastic Search

  - Solr

- Algolia (SaaS)

- Databases (most databases have basic full text support today)

- MeiliSearch

Then we have 2 options:

- Support all parameters that are available in at least one of these engines and make clear when a parameter cannot be used in the current installation.

- Support only common parameters.
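The difference between the two options can be sketched as a union versus an intersection of capability sets. The engine names and their parameter sets below are made up for illustration and do not describe what any real engine supports.

```python
# Made-up engines and capability sets, for illustration only.
ENGINE_PARAMETERS = {
    "engine_a": {"language", "stopwords", "weights", "synonyms"},
    "engine_b": {"language", "stopwords", "weights"},
    "engine_c": {"language", "stopwords"},
}


def all_parameters(engines):
    """Option 1: every parameter supported by at least one engine."""
    return set().union(*engines.values())


def common_parameters(engines):
    """Option 2: only parameters supported by every engine."""
    return set.intersection(*engines.values())


def unsupported(engines, installed, requested):
    """Requested parameters the installed engine cannot honour."""
    return sorted(set(requested) - engines[installed])


print(sorted(all_parameters(ENGINE_PARAMETERS)))
# ['language', 'stopwords', 'synonyms', 'weights']
print(sorted(common_parameters(ENGINE_PARAMETERS)))
# ['language', 'stopwords']
print(unsupported(ENGINE_PARAMETERS, "engine_c", ["weights", "synonyms", "language"]))
# ['synonyms', 'weights']
```

With the first option the UI needs something like `unsupported` to explain which settings are ignored in the current installation. With the second option that check disappears, but so do the more advanced settings.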

We also have to implement processes like reindexing, because it might be necessary to index all your content items again after you change the stopwords.

Problem 2: Document transformation

The content items themselves are not the best representation for a full text index. Let's come back to our original example with the travel portal. A travel offer has references to the airport, the destination and the hotel. In the content these references are only stored as IDs, which provide no value for the search engine. So we must also configure which fields of the referenced items should be added to the document that is sent to the full text search engine.

All in all, it is a lot of effort to build a very good full text solution, and I am not sure whether it would provide enough value.
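A minimal sketch of such a transformation could look like this. The content store, the field names and the `INCLUDE` mapping are assumptions for the example and not how Squidex stores content.

```python
# Hypothetical content store keyed by id; all field names are assumptions.
CONTENT = {
    "airport-1": {"name": "Lisbon Airport", "iata": "LIS"},
    "hotel-1": {"name": "Sea View", "city": "Lisbon"},
    "offer-1": {"title": "Summer week", "airport": "airport-1", "hotel": "hotel-1"},
}

# Per reference field: which fields of the referenced item to copy over.
INCLUDE = {"airport": ["name", "iata"], "hotel": ["name"]}


def to_search_document(item_id, content, include):
    """Replace reference ids by the configured fields of the referenced items."""
    item = content[item_id]
    doc = {}
    for field_name, value in item.items():
        if field_name in include:
            referenced = content[value]  # resolve the id to the real item
            for sub in include[field_name]:
                doc[f"{field_name}.{sub}"] = referenced[sub]
        else:
            doc[field_name] = value
    return doc


search_doc = to_search_document("offer-1", CONTENT, INCLUDE)
print(search_doc)
# {'title': 'Summer week', 'airport.name': 'Lisbon Airport',
#  'airport.iata': 'LIS', 'hotel.name': 'Sea View'}
```

Note that a change to the airport would then also require reindexing every offer that references it.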